{

"info": {

"author": "Dragan Matesic",

"author_email": "dragan.matesic@gmail.com",

"bugtrack_url": null,

"classifiers": [],

"description": "# About\nMain objective is to secure fast deployment of crawler system that will help you setup all that you need to \nget started with data mining interactively over cmd/terminal.\nConcept is based on long working experience and mistakes that have been learned over the years in data mining \nand database arhitecture. It could save you a lot of time and effort using this framework.\nCurrently works on Win OS.\n\n# Installation\nMost important thing is that you have python 3 installed on your machine. \n\n**With virtual environment (recommended)**

\nTo create a virtual environment you first need to run ```pip3.7 install virtualenv```\nOn Windows, your virtual environments should be located in ```C:\\Users\\YourUsername\\Envs```\nThen create the virtual environment with ```py -3.7 -m virtualenv Envs/crawler_framework```

\nYour virtual environment should now be active by default. If it isn't, activate it \nby writing ```workon crawler_framework```
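
If you are unsure whether the virtual environment is active, a quick check from Python can tell you. The following is a minimal sketch and not part of crawler_framework: the helper name in_virtualenv is hypothetical, and it relies only on the standard sys module.

```python
import sys


def in_virtualenv():
    # Hypothetical helper, not provided by crawler_framework.
    # Older virtualenv releases set sys.real_prefix; venv and newer
    # virtualenv releases make sys.prefix differ from sys.base_prefix.
    if hasattr(sys, 'real_prefix'):
        return True
    return sys.prefix != getattr(sys, 'base_prefix', sys.prefix)


if __name__ == '__main__':
    print('Python version:', sys.version.split()[0])
    print('Virtual environment active:', in_virtualenv())
```

Run it with the same interpreter you plan to use for the installation, so that the result reflects the environment pip will install into.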

\nNow you can install the library with ```pip install crawler_framework```\n\n**Without virtual environment**

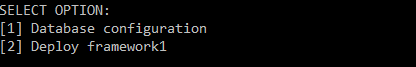

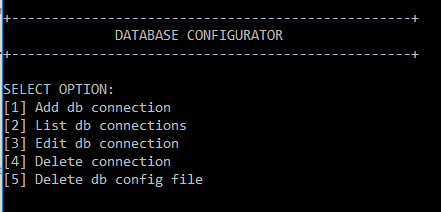

\nJust open cmd and write ```pip3.7 install crawler_framework```. This option is fine if you have only one version \nof Python installed on your system. If you have several Python versions installed and you still choose this \noption, you will have to answer some additional questions during configuration of the crawler framework. \nThis ensures that everything works either way.\n\n# Setup\n## Database configuration\nBefore we can deploy anything we must set up connection strings for the one or more database \nservers that we are going to use.\nPostgreSQL, Oracle and Microsoft SQL Server are currently supported.\n\nIt is recommended to create a database on your server that is used only by the framework. \n\n\nOpen cmd/terminal and write ```config.py```. If everything goes well you should see the options below. \nThe program may ask for some additional information if you have more than one Python interpreter \ninstalled on your machine and you did not use a virtual environment, but this is required only once.\n\n\n\nCreate all the database connections that you think you will use, from the database where you will deploy crawler_framework \nto the database where you will store data, by selecting option number 1 and then database option number 1.\n\n\n\n### Hints\n**Microsoft SQL Server**

\n If you are using the default option, be sure to define in the ODBC Data Source Administrator a DSN name whose \n default database is the one used for the framework. If you are using pymssql, you will define server_ip, port and database \n during the database configuration stage.\n\n## Deploying framework\nOpen cmd/terminal and write ```config.py```. Select option 2 (Deploy framework) and then select, from \nthe list of connections you created, the one to use for deployment. This will deploy the table structure in the selected database \non the selected server connection. In our case we will deploy it on a PostgreSQL localhost server.\n\n## Setup tor expert bundle\nYou can skip this if you are not planning to use tor as your proxy provider and will use only public proxies.\n\nOpen cmd/terminal and write ```config.py```. Select option 4 (Tor setup). Then, from the tor setup options, select option 1 (Install). \nThis will automatically install the tor expert bundle with all necessary directories and subdirectories. \n\nIf you have already installed it and then changed the default options, this will reset those options back to their defaults, much like a reinstall.\n\nSetup option 2 (Tor options) allows you to change some of the defaults used when constructing the tor network, such as \nhow many tor instances should run at the same time or how much time must pass before the identity of a tor instance is changed.\n\n## Starting proxy server\nThe proxy server is a multifunctional program that acquires new proxies (crawlers), tests proxies, creates the tor network, etc.\n\nOpen cmd/terminal and write ```config.py```. Select option 3 (Run proxy server).\n### Suboptions\nFrom the suboptions you can select suboption 0 to run both the public proxy gatherer and the tor service, suboption 1 to run only the public proxy gatherer, or\nsuboption 2 to run only the tor service.

\nSuboption 2 is useful if you want to run the tor service on another PC inside your network. \nBy doing so you can increase the number of tor instances that run without hogging your local CPU and RAM.\n
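
When the tor service runs on another PC, a crawler has to point its requests at that machine. The sketch below shows one way this could look with requests and pysocks, both of which are dependencies of this package. It is an illustration under assumptions, not the framework's own code: the function names tor_proxies and fetch_via_tor are hypothetical, port 9050 is the usual default SOCKS port of a stock tor install, and the ports that crawler_framework assigns to its tor instances may differ. It also assumes the remote tor instance is configured to accept connections from your machine.

```python
def tor_proxies(host='127.0.0.1', port=9050):
    # Hypothetical helper, not part of crawler_framework.
    # The socks5h scheme asks the proxy to resolve hostnames as well,
    # so DNS lookups also go through tor.
    address = 'socks5h://{}:{}'.format(host, port)
    return {'http': address, 'https': address}


def fetch_via_tor(url, proxies, timeout=30):
    # Imported lazily so that building the proxy mapping above
    # works even where requests is not installed.
    import requests

    return requests.get(url, proxies=proxies, timeout=timeout)
```

To use a tor service on another PC, pass the address of that machine as host to tor_proxies and hand the result to fetch_via_tor together with the URL you want to crawl.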

\nAll data will be saved in the database where you deployed crawler_framework.\nIf you have not deployed the framework, this will probably end with exceptions.\n\n\n",

"description_content_type": "text/markdown",

"docs_url": null,

"download_url": "",

"downloads": {

"last_day": -1,

"last_month": -1,

"last_week": -1

},

"home_page": "",

"keywords": "",

"license": "MIT",

"maintainer": "",

"maintainer_email": "",

"name": "crawler-framework",

"package_url": "https://pypi.org/project/crawler-framework/",

"platform": "",

"project_url": "https://pypi.org/project/crawler-framework/",

"project_urls": null,

"release_url": "https://pypi.org/project/crawler-framework/0.1.8/",

"requires_dist": [

"SQLAlchemy",

"pandas",

"requests",

"bs4",

"stem",

"pymssql",

"pyodbc",

"psycopg2",

"cx-oracle",

"aiohttp-socks",

"aiohttp",

"psutil",

"virtualenv",

"lxml",

"pysocks"

],

"requires_python": "",

"summary": "Framework for crawling",

"version": "0.1.8"

},

"last_serial": 6005732,

"releases": {

"0.1": [

{

"comment_text": "",

"digests": {

"md5": "f4ffaba03195ca06541ea71e3dee9a56",

"sha256": "bda5e1e9365354b74b2f652c59d2cc5a9b9da53152aa95daa63ab012485de490"

},

"downloads": -1,

"filename": "crawler_framework-0.1-py3-none-any.whl",

"has_sig": false,

"md5_digest": "f4ffaba03195ca06541ea71e3dee9a56",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 4629,

"upload_time": "2019-07-05T06:10:51",

"url": "https://files.pythonhosted.org/packages/28/d1/d9893388d22d67cc79f48e4d72a12e6ecd3a336687440efd3d42069ba3f2/crawler_framework-0.1-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "4f947e01c05637ebdd3b3c812f9ac005",

"sha256": "178ea81e7ccc60725efdb998f141f539bb27f1d8e6fbbc30bfe30e46dd7d9af7"

},

"downloads": -1,

"filename": "crawler_framework-0.1.tar.gz",

"has_sig": false,

"md5_digest": "4f947e01c05637ebdd3b3c812f9ac005",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 3815,

"upload_time": "2019-07-05T06:10:53",

"url": "https://files.pythonhosted.org/packages/c0/fa/8b1003b5dd6e233ec97aaabcefd323ec52230bccbd047e81e49e9970a26b/crawler_framework-0.1.tar.gz"

}

],

"0.1.1": [

{

"comment_text": "",

"digests": {

"md5": "ae7682dd6df9776d6378e5c835a8f225",

"sha256": "d10f880eae53b78341be48af1f3ddcf489fcc6b213955ae4f9782d118a7c30e8"

},

"downloads": -1,

"filename": "crawler_framework-0.1.1.tar.gz",

"has_sig": false,

"md5_digest": "ae7682dd6df9776d6378e5c835a8f225",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 1511,

"upload_time": "2019-07-05T08:50:32",

"url": "https://files.pythonhosted.org/packages/16/70/d0754e131e382be57063310f4deeb89be47dd3998fb5e114cc148955cb03/crawler_framework-0.1.1.tar.gz"

}

],

"0.1.2": [

{

"comment_text": "",

"digests": {

"md5": "2f39e215f3b27645432c31ae1224c6b0",

"sha256": "9be7c0ec566370aba5c877ee8edb00ac63c8551c23d8556b904e086d45c36baf"

},

"downloads": -1,

"filename": "crawler_framework-0.1.2-py3-none-any.whl",

"has_sig": false,

"md5_digest": "2f39e215f3b27645432c31ae1224c6b0",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 7294,

"upload_time": "2019-07-05T09:34:11",

"url": "https://files.pythonhosted.org/packages/4e/2c/516746c256ca0220d847de0f06ad8bc5771d3bda4ba51aa9a25e3842ce0f/crawler_framework-0.1.2-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "7cd482df99540dc22c8fbbe480524444",

"sha256": "7446468e2b59cf8acaed1e55fd4ed82eb6d9a466998b8e888f63540632358c69"

},

"downloads": -1,

"filename": "crawler_framework-0.1.2.tar.gz",

"has_sig": false,

"md5_digest": "7cd482df99540dc22c8fbbe480524444",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 3914,

"upload_time": "2019-07-05T09:34:12",

"url": "https://files.pythonhosted.org/packages/d6/7d/63976ea3110620846f13b524afa2a0645c989f49d56066c95969dcc3c6f9/crawler_framework-0.1.2.tar.gz"

}

],

"0.1.3": [

{

"comment_text": "",

"digests": {

"md5": "763defd90a662aab0f10ce71117913d8",

"sha256": "0967d5b15e574a9d8f20e25e6e6d6bf4b3bf8b0029b059b5f86e682a7912776f"

},

"downloads": -1,

"filename": "crawler_framework-0.1.3-py3-none-any.whl",

"has_sig": false,

"md5_digest": "763defd90a662aab0f10ce71117913d8",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 4702,

"upload_time": "2019-07-05T10:08:55",

"url": "https://files.pythonhosted.org/packages/77/79/68f9cf1adab7efbdb15841bb91d02dfb78fe9fa36bde5e5eab373f6b5bc3/crawler_framework-0.1.3-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "9e7a97829a91e17b02716f0b6a7aea41",

"sha256": "3b85ae8d265f8390442a93747dbb3b6b5e0ccd77744e6f48db77cfea7656cc15"

},

"downloads": -1,

"filename": "crawler_framework-0.1.3.tar.gz",

"has_sig": false,

"md5_digest": "9e7a97829a91e17b02716f0b6a7aea41",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 3906,

"upload_time": "2019-07-05T10:08:56",

"url": "https://files.pythonhosted.org/packages/dc/03/98fc01547f9fe3b1dc64cfa8e09c04897a0b5c787bbc47bf368c9282ac7f/crawler_framework-0.1.3.tar.gz"

}

],

"0.1.4": [

{

"comment_text": "",

"digests": {

"md5": "f844fc6c2e02621a26c1d1811c36464a",

"sha256": "c69445e4db3f04308e5a635d32baafee6f24e22cd1786ed9b4ba9858cd66eda3"

},

"downloads": -1,

"filename": "crawler_framework-0.1.4-py3-none-any.whl",

"has_sig": false,

"md5_digest": "f844fc6c2e02621a26c1d1811c36464a",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 4636,

"upload_time": "2019-07-05T10:14:24",

"url": "https://files.pythonhosted.org/packages/60/43/d1bc49f3d184db19543b393aebc94242a1ac59219687fadfe5cde20ae688/crawler_framework-0.1.4-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "2ce936bd79fa7d0f16c10591e48a8fd2",

"sha256": "679cd002d06a18c7f12443d015650b784303784892002bae1e606489418c345e"

},

"downloads": -1,

"filename": "crawler_framework-0.1.4.tar.gz",

"has_sig": false,

"md5_digest": "2ce936bd79fa7d0f16c10591e48a8fd2",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 3838,

"upload_time": "2019-07-05T10:14:25",

"url": "https://files.pythonhosted.org/packages/e8/b4/488366c22e0c6a4d82d6b2cb9b6208128a1f9c3c0af0eafa7a310b8c5a94/crawler_framework-0.1.4.tar.gz"

}

],

"0.1.5": [

{

"comment_text": "",

"digests": {

"md5": "1b795017d070fde76a98d970a3ea3854",

"sha256": "60b68019bcce8a9364f70cc474a6904ef3c89d4bcaf20288ae3f9802f337fd9e"

},

"downloads": -1,

"filename": "crawler_framework-0.1.5-py3-none-any.whl",

"has_sig": false,

"md5_digest": "1b795017d070fde76a98d970a3ea3854",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 8650,

"upload_time": "2019-07-12T08:39:32",

"url": "https://files.pythonhosted.org/packages/ff/ca/2c005b1f5141b6464720291e174155b21defddee22e0cf15ee74efe9eabb/crawler_framework-0.1.5-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "09e1f9b5cdd31014aad70263b1dd5249",

"sha256": "2343d1d9d90aafe96ba3e5b69224495e4c61d90aeca5d0533c3fc1309d989ebe"

},

"downloads": -1,

"filename": "crawler_framework-0.1.5.tar.gz",

"has_sig": false,

"md5_digest": "09e1f9b5cdd31014aad70263b1dd5249",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 7398,

"upload_time": "2019-07-12T08:39:34",

"url": "https://files.pythonhosted.org/packages/82/60/9776de9f7e86836ef847ba8e8503ba58a427c53038c1d4c2b15a39aa05fa/crawler_framework-0.1.5.tar.gz"

}

],

"0.1.7": [

{

"comment_text": "",

"digests": {

"md5": "51e9a6af16bb53b626feac4f46ef387f",

"sha256": "b1f39426fafc66687445ba289027b80911144b3380b95457f134d96cae095428"

},

"downloads": -1,

"filename": "crawler_framework-0.1.7-py3-none-any.whl",

"has_sig": false,

"md5_digest": "51e9a6af16bb53b626feac4f46ef387f",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 62435,

"upload_time": "2019-10-05T19:45:14",

"url": "https://files.pythonhosted.org/packages/b7/aa/502331d01e4a02f0f9ccfe6913aa18cbcd11920eb283a8b0910381221722/crawler_framework-0.1.7-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "e3f242ec81e97c44d72fd7702bbe0512",

"sha256": "e6ad4a50c5c701f6ad03abefd0fd8b39f37ef83a0ff89274005e3b9851b763fc"

},

"downloads": -1,

"filename": "crawler_framework-0.1.7.tar.gz",

"has_sig": false,

"md5_digest": "e3f242ec81e97c44d72fd7702bbe0512",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 39767,

"upload_time": "2019-10-05T19:45:16",

"url": "https://files.pythonhosted.org/packages/a8/55/978312970cc4e00cda0195735d19d0f07d1fac9d82f7320370988ce73567/crawler_framework-0.1.7.tar.gz"

}

],

"0.1.8": [

{

"comment_text": "",

"digests": {

"md5": "ece8af7cd55d3ea316be5604dd500c4b",

"sha256": "6e6d1201602c5b0d0e15bc33aa78a161eaea0e1a1742fe33e94f391a3e9ec5b0"

},

"downloads": -1,

"filename": "crawler_framework-0.1.8-py3-none-any.whl",

"has_sig": false,

"md5_digest": "ece8af7cd55d3ea316be5604dd500c4b",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 58454,

"upload_time": "2019-10-21T06:39:12",

"url": "https://files.pythonhosted.org/packages/94/11/5d517419718b0e59f05c8cafe9e885229320d390fb35e6cc4e27cfd310ad/crawler_framework-0.1.8-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "e83dd42d1159fb0caa1e6e78affdbb01",

"sha256": "c186915aa5cf78885658fa9060d8a9c5427bbc27fdda61db4dc847976787e4a9"

},

"downloads": -1,

"filename": "crawler_framework-0.1.8.tar.gz",

"has_sig": false,

"md5_digest": "e83dd42d1159fb0caa1e6e78affdbb01",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 38102,

"upload_time": "2019-10-21T06:39:13",

"url": "https://files.pythonhosted.org/packages/a2/2f/cf8aa921939d7c6f0f55619d3b4d6b0d4cb467abbf844b20af93ccb1ed64/crawler_framework-0.1.8.tar.gz"

}

]

},

"urls": [

{

"comment_text": "",

"digests": {

"md5": "ece8af7cd55d3ea316be5604dd500c4b",

"sha256": "6e6d1201602c5b0d0e15bc33aa78a161eaea0e1a1742fe33e94f391a3e9ec5b0"

},

"downloads": -1,

"filename": "crawler_framework-0.1.8-py3-none-any.whl",

"has_sig": false,

"md5_digest": "ece8af7cd55d3ea316be5604dd500c4b",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": null,

"size": 58454,

"upload_time": "2019-10-21T06:39:12",

"url": "https://files.pythonhosted.org/packages/94/11/5d517419718b0e59f05c8cafe9e885229320d390fb35e6cc4e27cfd310ad/crawler_framework-0.1.8-py3-none-any.whl"

},

{

"comment_text": "",

"digests": {

"md5": "e83dd42d1159fb0caa1e6e78affdbb01",

"sha256": "c186915aa5cf78885658fa9060d8a9c5427bbc27fdda61db4dc847976787e4a9"

},

"downloads": -1,

"filename": "crawler_framework-0.1.8.tar.gz",

"has_sig": false,

"md5_digest": "e83dd42d1159fb0caa1e6e78affdbb01",

"packagetype": "sdist",

"python_version": "source",

"requires_python": null,

"size": 38102,

"upload_time": "2019-10-21T06:39:13",

"url": "https://files.pythonhosted.org/packages/a2/2f/cf8aa921939d7c6f0f55619d3b4d6b0d4cb467abbf844b20af93ccb1ed64/crawler_framework-0.1.8.tar.gz"

}

]

}